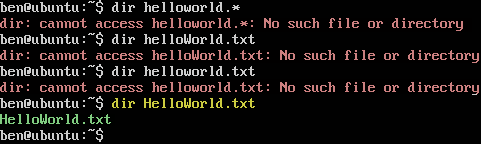

MySQL lower/UPPER casing

and ISO 8859, Unicode, UTF-8, UTF-16, UTF-32 Character Set Collations

7 minutes

Tip using Ko-fi or Buy Me a Coffee

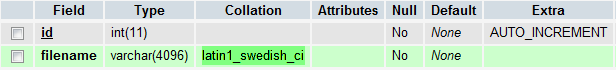

By default MySQL uses the collation of latin1_swedish_ci character set for all columns. First off in case you are wondering the ci after the second underscore means case insensitive. All SQL queries targeting this column will ignore the letter case of the query values and the field data. Other collations that end with cs are case sensitive, which in everyday use is often more of a hindrance. That is unless you are needing to store the names of pointers to data held on case-sensitive file systems such as those used by Unix and Linux.

According to the official documentation latin1_swedish is the MySQL equivalent of the Windows CP1252 character set. For those of you who do not know Windows CP1252 character set is basically the same as the universal ISO 8859-1 / Latin1 character set.

If the above paragraph meant very little to you, a character set or character encoding scheme is a means of representing alpha-numeric and punctuation characters in an alternative form. The character A encoded in the Morse code character set is represented by a dot and dash.

The same character A as a capital in the 1963 ASCII character encoding scheme is represented by the hexadecimal 0041, the decimal 65 and the octal of 101. More importantly it also states that the character capital A is represented as the 7-bit binary code 100 0001. The basis of this 1963 uniformity is still adhered to today and allows ASCII text documents written on ancient computers to be usable on modern machines. It permits this text file http://www.textfiles.com/apple/parameters.txt from 1982 which was written on an Apple II home computer to be read in a modern web browser. B.t.w. the odd formatting in the text file is due to the Apple II’s archaic capability of only handling upper-casing letters with 40 character strings per line.

So back to the latin1_swedish, CP1252 and ISO 8859-1 character sets which I should add are for the most part interchangeable. They are sets of numeric codes (remember how we said A is represented as 41 hexadecimal or 65 decimal) which can handle the majority of characters needed by Western European languages that use the Latin alphabet. So French, Spanish, Italian and English are in but the Greek alphabet is not. You can find a list of all the characters that make up the ISO 8859-1 character set with their numeric, HTML and binary codes on ascii-code.com.

Lets go through some of these acronyms and technical terms.

- ASCII (American Standard Code for Information Interchange) is an early computing standard that was developed by the Washington based American Standards Association to exclusively handle English data communication between different computers and telecommunication equipment.

- ANSI (American National Standards Institute) escape codes were formalised in 1976 as an extension of ASCII to include colour, formatting and controls for computer display terminals (display monitors). Confusingly ANSI escape codes are also known as ECMA-48 and as ISO 6429. Former Microsoft DOS users may remember the need for the ANSI.SYS driver to handle ANSI documents.

- The ISO 8859 (International Organisation for Standardisation) is a mid-1980s European standard with a similar purpose to ASCII except that it introduced additional character sets to handle non-English languages.

- While CP1252 (Code Page) is part of a Microsoft developed guideline designed for use in the Windows operating system. Non-standard compliant code pages were initially developed by IBM as CP437 to be a collection of graphical glyphs for use on their first personal computer, the 1981 IBM-PC in the US market. Other vendors later modified or created new code pages for various non-US regions.

The ISO 8859 and Code Page series of 8-bit (1 byte) character sets were only designed to handle 256 unique characters. They had to include upper and lower casing for each character, separate characters for accents, as well as control codes for new lines, tabs, spaces, printing etc. This size limitation meant different languages and their derivatives need their own unique 8-bit character sets. It is why there are numerous legacy sets and character schemes out in the wild including 16 different parts to ISO 8859.

Unfortunately these different sets are not interchangeable outside the basic ASCII English alpha-numeric and punctuation characters. As the sets generally assign different characters to the same identifiers. So someone in the Baltic (ISO 8859-4) reading a document written in the UK (ISO 8859-1) would see £10 displayed as Ŗ10. In ISO 8859 both £ and Ŗ share the same hexadecimal of 00A3, decimal 163, octal of 243, binary of 10100011. It means you can never have both these characters displayed together in the same document when using ISO 8859.

The solution to this glaring issue was to create a new character encoding set without the inherit 8-bit / 1 byte, 256 character limit. One that could handle all characters in a unified setup without the need to switch between different groupings of characters. The solution was the Universal Character Set but more commonly referred to as Unicode. Unicode solves all these problems with a simple and fairly obvious solution. It assigns a unique code point to every character it recognises and supports. Capital A is given U+0041, the pound sign £ is always U+00A3 and capital R with cedilla Ŗ is guaranteed to be U+0156.

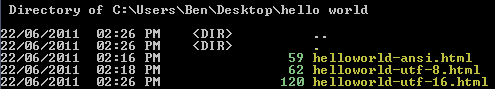

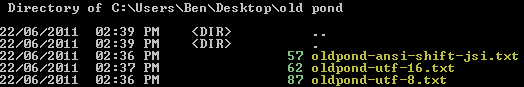

These code points are converted into binary using the Unicode Transformation Format character encoding schemes. There are a number of these schemes including UTF-16 and UTF-32 but the most common is UTF-8 due to its popularity on the Internet. It partly won acceptance due to its backwards compatibility with the original ASCII standard and its variable-width encoding. Which makes it size/bandwidth friendly for many Western European texts in addition to the majority of Internet scripting and programming languages which are based on English.

The ASCII compatibility means that the 8-bit (1 byte) ASCII binary representation for capital A 01000001 is also the same UTF-8 binary representation for the code point U+0041 (capital A in Unicode). The first 127 code points in UTF-8 are all 1 byte in size. The following 1920 points are 2 bytes, then the next 65,346 are 3 bytes while the remaining 1million+ are 4 bytes. Which means both Unicode and UTF-8 can represent over 1 million unique characters yet most Latin-1 characters remain 1 byte in size.

In contrast UTF-32 which is a fix-width encoder requires 32-bits or 4 bytes of binary data for all code points including our example capital letter A (00000000 00000000 00000000 01000001). In UTF-16 the capital A in binary is 00000000 01000001, so it requires 2 bytes but there are many other code points that require a pair of 16-bit or 2 byte values making them 4 bytes in size.

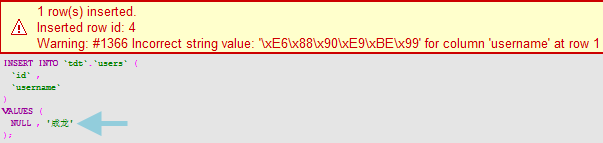

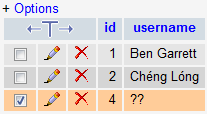

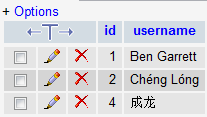

My advise as to which collation you should choose for your data depends on the column’s purpose. If potentially a group of international users are going to be submitting data such as their personal details, addresses, names, signatures etc. to your system then you should at least use UTF-8 to store that data.

On the other hand if you’re going to be keeping the data submission in-house or you know that you are not going to encounter any non Western Latin-1 characters then you should be okay sticking to the MySQL’s defaults.

Alternatively another good use for UTF-8 is where you are pulling data off the Internet and saving it locally to your MySQL database. With data fetched from website HTTP headers, RSS feeds or interactions with 3rd party API’s. You never know what data your application may run into so your system and database should be prepared for anything. But remember that each step of your setup needs to fully support UTF-8, for example …

HTML form text input encoded as UTF-8 → PHP data processing in UTF-8 → MySQL UTF-8 storage.

Additional links.

- Character Sets and Collations That MySQL Supports

- Conversion Table – Decimal, Hexadecimal, Octal, Binary

- Graphemica

- Binary Convert

- Unicode FAQ

- ANSI Character Set

- ASCII Character Set

Written by Ben Garrett